A test for AGI is closer to being solved — but it may be flawed

A well-known test for artificial general intelligence (AGI) is closer to being solved. But the test’s creators say this points to flaws in the test’s design, rather than a bona fide research breakthrough.

In 2019, François Chollet, a leading figure in the AI world, introduced the ARC-AGI benchmark, short for “Abstraction and Reasoning Corpus for Artificial General Intelligence.” Designed to evaluate whether an AI system can efficiently acquire new skills outside the data it was trained on, ARC-AGI, Chollet claims, remains the only AI test to measure progress towards general intelligence (although others have been proposed).

Until this year, the best-performing AI could only solve just under a third of the tasks in ARC-AGI. Chollet blamed the industry’s focus on large language models (LLMs), which he believes aren’t capable of actual “reasoning.”

“LLMs struggle with generalization, due to being entirely reliant on memorization,” he said in a series of posts on X in February. “They break down on anything that wasn’t in their training data.”

To Chollet’s point, LLMs are statistical machines. Trained on a lot of examples, they learn patterns in those examples to make predictions, like that “to whom” in an email typically precedes “it may concern.”
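The idea of learning patterns from examples and using them to predict what comes next can be illustrated with a toy word-pair counter. This is only a minimal sketch: real LLMs are neural networks trained on vastly more data, and the corpus, the function names `train_bigram` and `predict_next`, and the counting approach are all assumptions made up for illustration.

```python
from collections import Counter, defaultdict

def train_bigram(sentences):
    """Count, for every word, which words follow it in the training text."""
    follows = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows

def predict_next(follows, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = follows.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]

# Hypothetical training examples, invented for this sketch.
corpus = [
    "to whom it may concern",
    "to whom it may concern please find attached",
    "to whom should I send this",
]
model = train_bigram(corpus)

print(predict_next(model, "whom"))   # most common word seen after "whom"
print(predict_next(model, "zebra"))  # a word that never appeared in training
```

The second call shows the limitation Chollet describes in its crudest form: a model built purely from counts has nothing to say about input it has never seen.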

Chollet asserts that while LLMs might be capable of memorizing “reasoning patterns,” it’s unlikely that they can generate “new reasoning” based on novel situations. “If you need to be trained on many examples of a pattern, even if it’s implicit, in order to learn a reusable representation for it, you’re memorizing,” Chollet argued in another post.

To incentivize research beyond LLMs, Chollet and Zapier co-founder Mike Knoop launched a $1 million competition in June to build open source AI capable of beating ARC-AGI. Out of 17,789 submissions, the best scored 55.5%, about 20 percentage points higher than 2023’s top scorer, albeit short of the 85% “human-level” threshold required to win.

This doesn’t mean we’re ~20% closer to AGI, though, Knoop says.

Today we’re announcing the winners of ARC Prize 2024. We’re also publishing an extensive technical report on what we learned from the competition (link in the next tweet).

The state-of-the-art went from 33% to 55.5%, the largest single-year increase we’ve seen since 2020. The…

— François Chollet (@fchollet) December 6, 2024

In a blog post, Knoop said that many of the submissions to ARC-AGI have been able to “brute force” their way to a solution, suggesting that a “large fraction” of ARC-AGI tasks “[don’t] carry much useful signal towards general intelligence.”

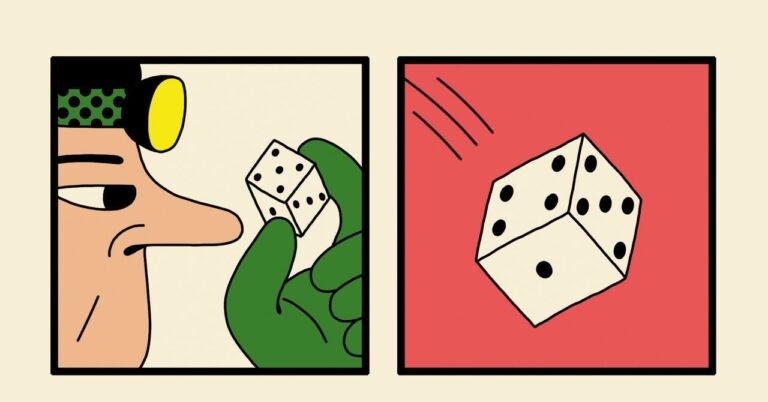

ARC-AGI consists of puzzle-like problems where an AI has to, given a grid of different-colored squares, generate the correct “answer” grid. The problems were designed to force an AI to adapt to new problems it hasn’t seen before. But it’s not clear they’re achieving this.
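To make the grid format concrete, here is a minimal sketch of what such a puzzle and a solver might look like in code. Everything in it is an assumption for illustration: the task (mirroring each row) is invented, colors are represented as small integers, and the helper names `fits_examples`, `brute_force`, and `score` are hypothetical rather than part of any official tooling. It also shows, in miniature, how trying candidates from a fixed list can find an answer without anything resembling reasoning.

```python
Grid = list[list[int]]  # each integer stands for a square's color

# A made-up task: the hidden rule is "mirror every row left to right".
task = {
    "train": [
        {"input": [[1, 0, 0], [2, 2, 0]], "output": [[0, 0, 1], [0, 2, 2]]},
        {"input": [[3, 0], [0, 4]], "output": [[0, 3], [4, 0]]},
    ],
    "test": [
        {"input": [[5, 0, 0], [0, 6, 0]], "output": [[0, 0, 5], [0, 6, 0]]},
    ],
}

def identity(grid: Grid) -> Grid:
    return [list(row) for row in grid]

def mirror(grid: Grid) -> Grid:
    return [list(reversed(row)) for row in grid]

def flip_vertical(grid: Grid) -> Grid:
    return [list(row) for row in reversed(grid)]

# A tiny, hand-picked library of candidate transformations (an assumption).
CANDIDATES = [identity, mirror, flip_vertical]

def fits_examples(solver, task) -> bool:
    """True if the solver reproduces every demonstration pair exactly."""
    return all(solver(pair["input"]) == pair["output"] for pair in task["train"])

def brute_force(task):
    """Return the first candidate consistent with all demonstrations, if any."""
    for candidate in CANDIDATES:
        if fits_examples(candidate, task):
            return candidate
    return None

def score(solver, task) -> float:
    """Fraction of test grids the solver gets exactly right."""
    pairs = task["test"]
    correct = sum(solver(pair["input"]) == pair["output"] for pair in pairs)
    return correct / len(pairs)

found = brute_force(task)
print(found.__name__ if found else None, score(found, task) if found else 0.0)
```

In this sketch a grid only counts as solved when every square matches, and the search succeeds simply because the right transformation happened to be in the candidate list.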

“[ARC-AGI] has been unchanged since 2019 and is not perfect,” Knoop acknowledged in his post.

Chollet and Knoop have also faced criticism for overselling ARC-AGI as a benchmark toward AGI, at a time when the very definition of AGI is being hotly contested. One OpenAI staff member recently claimed that AGI has “already” been achieved if one defines AGI as AI “better than most humans at most tasks.”

Knoop and Chollet say that they plan to release a second-gen ARC-AGI benchmark to address these issues, alongside a 2025 competition. “We will continue to direct the efforts of the research community towards what we see as the most important unsolved problems in AI, and accelerate the timeline to AGI,” Chollet wrote in an X post.

Fixes likely won’t come easy. If the first ARC-AGI test’s shortcomings are any indication, defining intelligence for AI will be as intractable — and inflammatory — as it has been for human beings.